JVM bytecode sucks

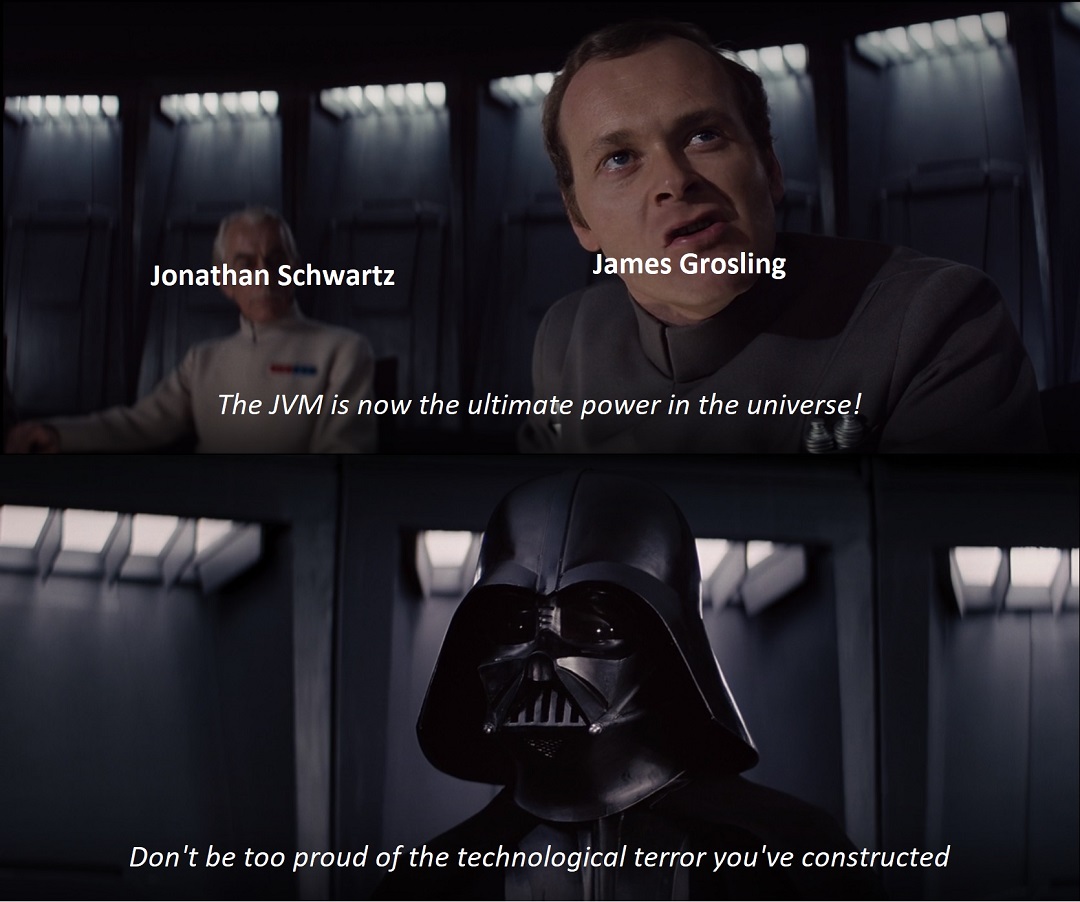

Pub. 2020 Jun 6I have a pretty embarrassing guilty pleasure: I read HackerNews while I eat dinner. It's not usually that bad, but last night I read a particularly juicy thread on a blog post about implementing a toy JVM. I've already done a little bit of JVM bytecode reading and interpretting myself, but let's be honest, as an HN reader I would have only skimmed the first couple paragraphs anyways. The HN comments section is where the real good stuff starts:

The opening line confuses me... The JVM is one of the fastest, well established, well documented, widely deployed platform in the world. Hundreds of languages run on it, quite quickly.

A downvoted comments comparing JVMs to "steam engines", to which there is a reply:

"Steam Engine" isn't what I'd call the JVM; it's still a remarkable piece of software driving hundreds of thousands business apps with a strong focus on long-term maintenance, excellent mindshare, and really very good performance for what it does"

and

I agree all that about jvm. It had considerable impact on industry and it is nice piece of software.

I'm not going to argue about any of these. But they did make me want to write a thing dunking on JVMs and the JVM bytecode spec. During all of this I'm going to be specifically talking about the JVM 8 spec, but as far as I've skimmed the rest of them have the same issues.

.class files are too big:

Any given class file is exorbitantly large for what it does. I'm going to be listing numbers of wasted bytes relative to what I would consider a reasonably enterprise-y Java project without any dependencies: 12k class files totaling around 45MB total. The totals below are very rough but shouldn't seem unreasonable.

Pull up any random class file in a hex editor and you'll see that on average more than half the file is strings and other metadata, not actual instructions. If you make debug builds there are things in there like variable names, but these are usually the vast minority. The main contents of that section of the class file are full class names (e.g. Lit/unimi/dsi/fastutil/doubles/Double2DoubleAVLTreeMap), method names (get, put, values), and method signatures (()Lit/unimi/dsi/fastutil/doubles/DoubleCollection;). What this means is that not only will every class file declare its own full class name string, so too must every single class that wants to invoke anything that so much as touches it (uncompressed too!). Assuming an average class signature of around 50 chars and it's being referenced on average in a dozen other class files, we're at 6.86MB of purely repeated bytes over the whole of the project. Note that this number may be a little high since (in Java anyways) lots of anonymous inner classes end up getting created that don't have as many imports, but still.

As part of the JVM there are also these things called "attributes" which are like tags that you can associate with fields, methods, or for the whole class. Each one of these tags have a type. These are NOT implemented as an id, but as an index into the constant pool to an item that contains the full text string of the attribute (e.g. SourceFile or LocalVariableTable). There are around 5 or 6 of these that you'd expect to find in any given class file, and assuming each of these averages 10 or 15 bytes, we're wasting around 0.82MB when we could be using a 1 byte tag.

Just from those two things alone you're looking at an estimated duplication rate of around 17%. And just in case it comes up, .jar files aren't an excuse!

Nothing is where you want it

Basically everything you want is in the constant pool. In order to read any meaningful data out of a class file first things first you need is to be able to parse the constant pool.

You want to know what numbers are being added to your variable? No immediates to be found here, go fetch the numbers out of the constant pool!

You want to know the full name of the class you're parsing? Follow that index to the constant pool. Oh wait, no, that's a CONSTANT_Class entry. What does the CONSTANT_Class entry contain? It contains a single index, the index of the actual UTF8 string you wanted.... elsewhere in the constant pool.

You want to know the name of the method that you're about to parse? Check the constant pool. You'll get a CONSTANT_Methodref, which will redirect you to 2 other strings, the actual method string and the method's signature (in textual format), both indexes somewhere else in the constant pool. This one is made all the more silly because it's not only identical to CONSTANT_Fieldref and CONSTANT_InterfaceMethodref, but since each entry in the pool has no size parameter there's actually no reason at all to break them apart.

Don't go getting CONSTANT_MethodHandle and CONSTANT_Methodref mixed up! And don't even get me started on all that invokedynamic stuff...

When reading out attributes (which you have to do to do anything interesting, like reading the actual executable bytecode in a method for instance) in order to tell what type the attribute is you have to look up its name in the constant pool, so hold your strcmp's close. Additionally there are attributes that can nest other attributes like the Code attribute. At least for these attribute things they decided to place the length of the attribute in the header. Their format is several times over-inflated but I guess at least they owned it.

Indexes are hard

There are 2 types of indexes that are of primary importance in class files: constant pool indexes ("what is the name of the method?") and bytecode indexes ("what instruction does this goto instruction take me to?"). The constant pool indexes are 1-based and the bytecode indexes are 0-based. The constant pool indexes are index-based ("1st pool entry, 2nd pool entry") while the bytecode indexes are byte-based ("go down 5 bytes to get to the next instruction").

That second point becomes a larger issue when you want to parse full class files and you have to go to the constant pool for every last piece of information. You end up having to build your own jump table in order to not get eaten alive by traversing the constant pool structure over and over and over.

Not much more to say about that, other than that I don't think this is mentioned anywhere in the spec. Also, wtf.

Bad instructions

I know a lot of people are all about rampant types in their bytecode and their intrinsics and such, but you'll never convince me that there's a value to having typed loads (iload, fload) and typed stores (istore, fstore) while also at the same time requiring that both need to be statically verified that they can only take their appropriate types before running. How does it make sense that iload needs to only only be used on integer types in the local variable table when the JVM has to verify the types match anyways? Also, I guess stores are important enough to need types specified, but not ldc?

The opcode table is divided into sections, so for example opcodes 0xa7 - 0xb1 are all going to be instructions involving control flow in some way. This by itself is nice I guess in a philosophical way, but at this point the opcode list is packed tight. Everyone knows that optimization has to be taken care of at both the front- and back-ends, but the JVM team has essentially decided that all improvements over any metric in a running Java program is now the sole responsibility of the back-end. If you've ever looked at the JIT'd assembly dump of methods and noticed how the JVM seemingly can't vectorize anything except for the most trivial of code, this is why.

JVMTI

Using JVMTI you can retrieve almost any information about a class that you might have gleaned from parsing its class file... except for one thing: the exception table. Not having read the IntelliJ or Eclipse source, I'd wager that not having a way to access this is probably the single biggest responsible thing contributing to the terrible performance of Java debuggers when stepping in/over/out. Not making an excuse for their perf issues for local debugging, but I'm not really sure how you could make it any better when doing remote debugging.

I don't necessarily have an issue with not being able to access the stack since I do want the JIT to be able to move stuff around. But the only reason this is saved is because of the asymmetry between jsr and ret instructions, which itself is kinda dumb.

So yeah

This list is only touching on specific issues with the spec, and not the ones that they owned up to in the spec (like the whole doubles-take-2-slots nonsense). Also not mentioned are any of the myriad issues that one might have at the conceptual level about JVMs. Like, why does the spec spend 160-odd pages on bytecode verification but then spend just one sentence to say that garbage collection is implementation defined? I'm pretty sure that by the 90s we had all had enough experience with computers to come to the understanding that graphs were terrible ways of organizing anything, so why is it that methods and classes are referenced with what are basically hyperlinks and types are organized into trees? The list goes on and on.

These problems are the ones that I found when I played with JVMs for a couple of weekends, so there's probably more out there. And these things listed above aren't even bad in hindsight. They were bad decisions on the day they were made.